TL;DR

- Anthropic’s Computer Use is a breakthrough in computer-use reasoning: Claude can interpret screen pixels and propose UI actions.

- But a raw API isn’t a production agent. Enterprise deployment needs reliability, repeatability, and operational control.

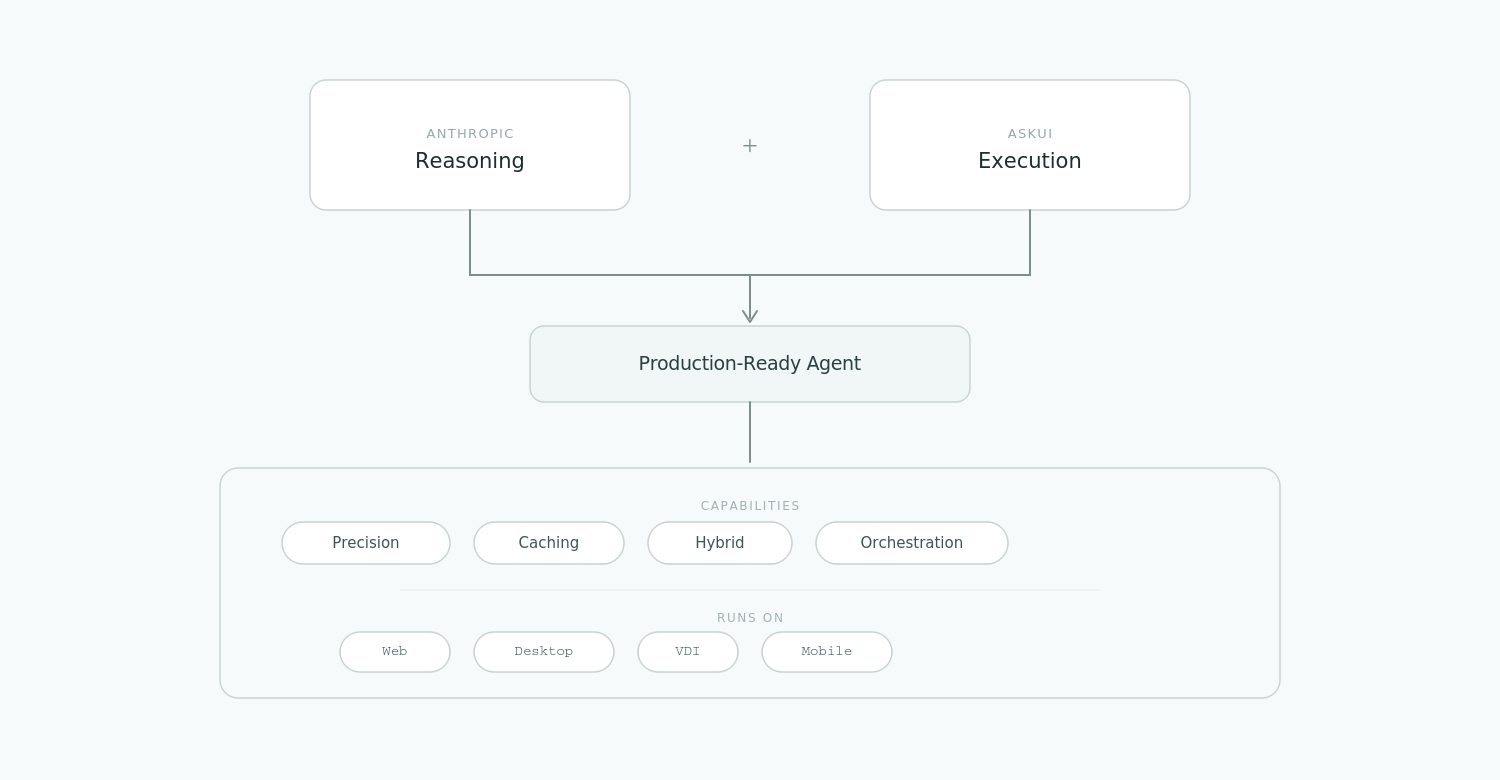

- AskUI provides the execution layer required to run Computer Use in production, combining AgentOS (running inside enterprise environments) with a hybrid execution system around Claude’s reasoning, including precision interaction logic, caching, and orchestration.

Why a Raw API is Not a Production Agent

Anthropic’s Computer Use unlocked a new class of computer-use agents by letting Claude “see” the screen and infer how to interact with interfaces that don’t expose stable structured targets. But teams building directly on a raw computer-use API quickly run into enterprise realities:

- Cost and latency: Re-sending screenshots for repetitive steps can become slow and expensive at scale.

- Execution fragility: Pixel-sensitive interfaces (dropdowns, grids, small targets) can amplify small interaction errors into retries and flaky runs.

- Context fragmentation: The API operates in a per-step loop. Coordinating state across monitors, OS dialogs, and devices requires additional execution and orchestration infrastructure.

In other words, the model can decide what to do, but production teams still need an execution layer that supports reliability, governance, and repeatability across real enterprise infrastructure.

AskUI does not replace Anthropic’s reasoning. AskUI provides the execution layer required to turn Claude’s intent into a production-ready agent.

1. Enterprise-Grade Reliability: Precision & Intelligent Caching

Anthropic provides decision-making. Enterprise workflows still need reliable execution.

When workflows rely purely on screen-based interaction, micro-errors compound into retries and flaky runs. And when a workflow repeats (login flows, navigation sequences), forcing the model to re-evaluate every step adds unnecessary latency and token cost.

AskUI addresses this at the execution layer:

Precision interaction logic

AskUI turns the model’s intent into grounded UI actions by adding execution-side interaction logic that reduces micro-errors on dense enterprise interfaces.
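AskUI’s actual interaction logic is not described in this article. As a generic illustration of what an execution-side correction can look like, the sketch below maps a coordinate predicted on a downscaled screenshot back to real screen pixels and clamps it to the screen bounds. The `Size` type and `to_screen_coords` function are hypothetical names introduced for this example only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Size:
    """Width and height in pixels (hypothetical helper type)."""
    width: int
    height: int


def to_screen_coords(x: float, y: float, screenshot: Size, screen: Size) -> tuple[int, int]:
    """Map a point predicted on a screenshot to real screen pixels.

    Assumes the screenshot is a uniformly resized copy of the full screen.
    """
    scale_x = screen.width / screenshot.width
    scale_y = screen.height / screenshot.height
    px = round(x * scale_x)
    py = round(y * scale_y)
    # Clamp so a slightly out-of-range prediction still lands on the screen.
    px = min(max(px, 0), screen.width - 1)
    py = min(max(py, 0), screen.height - 1)
    return px, py


if __name__ == "__main__":
    # A click predicted at the center of a 1024x768 screenshot of a 2048x1536 screen.
    print(to_screen_coords(512, 384, Size(1024, 768), Size(2048, 1536)))  # (1024, 768)
```

On dense interfaces, a mismatch of this kind between screenshot resolution and screen resolution is one plausible source of the small misses that turn into retries.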

Intelligent trajectory caching

AskUI can record and replay learned trajectories for repetitive workflows. When the system recognizes a familiar sequence, it can execute the cached path quickly and rely on Anthropic reasoning when the UI state changes or a deviation appears.
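The record-and-replay idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not AskUI’s implementation: `TrajectoryCache`, `state_key`, and the `plan_with_model` callback are hypothetical names, and the cache key is an exact hash of the task plus the raw screenshot bytes. A real system would likely need a fingerprint that tolerates minor pixel differences.

```python
import hashlib
from typing import Callable

Action = dict  # e.g. {"type": "click", "x": 10, "y": 20} (assumed shape)


def state_key(task: str, screen_bytes: bytes) -> str:
    """Fingerprint a (task, screen) pair with an exact SHA-256 hash."""
    digest = hashlib.sha256()
    digest.update(task.encode("utf-8"))
    digest.update(b"\x00")  # separator between task and screen data
    digest.update(screen_bytes)
    return digest.hexdigest()


class TrajectoryCache:
    """Replay stored actions for a known state; call the model otherwise."""

    def __init__(self, plan_with_model: Callable[[str, bytes], list]) -> None:
        self._plan = plan_with_model  # stand-in for a model call
        self._store: dict[str, list] = {}
        self.model_calls = 0

    def actions_for(self, task: str, screen_bytes: bytes) -> list:
        key = state_key(task, screen_bytes)
        if key not in self._store:
            # Unfamiliar state: fall back to model reasoning and record the result.
            self.model_calls += 1
            self._store[key] = self._plan(task, screen_bytes)
        return self._store[key]
```

Calling `actions_for` twice with the same task and screen invokes the planner once; a changed screen produces a new key and triggers a fresh model call, which mirrors the fallback behavior described above.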

2. High-Performance Engine: Structured Signals + Runtime UI Control

The most inefficient way to click a standard HTML button is to screenshot it, send it to a large model, wait for inference, and parse coordinates every time.

AskUI’s hybrid execution engine balances speed, cost, and reliability:

Structured signals first

In environments where structured signals are exposed and stable (for example in web contexts), AskUI uses them for fast, low-overhead execution.

Selectors and other structured signals are extremely useful when they are available and stable. The challenge in large automation suites is not selectors themselves, but the long-term maintenance required to keep them working as applications evolve.

As UI structures change, selectors break, test suites become brittle, and engineering teams spend increasing time maintaining automation instead of shipping software.

Runtime UI control when structure disappears

When the workflow reaches environments where structured targets are unavailable or unreliable, such as Citrix/VDI sessions, canvas-heavy interfaces, or OS-level permission dialogs, AskUI switches to runtime UI control and leverages Anthropic’s Computer Use to interpret the visible interface and drive actions.

This hybrid execution model allows automation to remain fast when structure exists and resilient when it does not.
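The "structured first, vision as fallback" pattern can be expressed as a small dispatcher. The sketch below is an assumption-laden illustration rather than AskUI’s engine: `run_step`, `TargetNotFound`, and the two callbacks are hypothetical, standing in for a selector-based click and a screenshot-plus-model click respectively.

```python
from typing import Callable


class TargetNotFound(Exception):
    """Raised by the structured path when no stable target exists (hypothetical)."""


def run_step(
    target: str,
    structured_click: Callable[[str], None],
    vision_click: Callable[[str], None],
) -> str:
    """Try the cheap structured path first; fall back to the vision path.

    Returns which path handled the step, which is useful for cost tracking.
    """
    try:
        structured_click(target)
        return "structured"
    except TargetNotFound:
        # No usable structure (e.g. a remote desktop or canvas): use the model.
        vision_click(target)
        return "vision"
```

Returning the path taken makes it possible to measure how many steps avoided a model call, which is the quantity the speed and cost claims depend on.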

3. Enterprise-Scale Orchestration Across Real Infrastructure

A raw computer-use API executes one action per inference step. Real enterprise workflows require continuity across multiple environments and system surfaces.

AskUI provides orchestration capabilities to coordinate workflows across:

- Multi-monitor setups

- Desktop operating systems (Windows, macOS, Linux)

- Virtualized environments such as VDI or Citrix

- Supported mobile environments when screen access and input control are available

AskUI maintains execution state and connects steps across systems into one continuous workflow.
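To make "execution state" concrete, here is a minimal sketch of a workflow runner that carries shared state across steps running on different surfaces. All names (`WorkflowState`, `Step`, `run_workflow`, and the surface labels) are hypothetical and chosen for illustration; the article does not describe how AskUI models state internally.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class WorkflowState:
    """State shared by every step, regardless of where the step runs."""
    values: dict = field(default_factory=dict)
    completed: list = field(default_factory=list)


@dataclass
class Step:
    name: str
    surface: str  # e.g. "web", "vdi", "mobile" (labels are assumptions)
    run: Callable[[WorkflowState], None]


def run_workflow(steps: list, state: Optional[WorkflowState] = None) -> WorkflowState:
    """Run steps in order, skipping any that the state marks as completed."""
    if state is None:
        state = WorkflowState()
    for step in steps:
        if step.name in state.completed:
            continue  # allows resuming a partially finished workflow
        step.run(state)
        state.completed.append(step.name)
    return state
```

Because each step reads from and writes to the same `WorkflowState`, a value produced on one surface (say, a session identifier from a web login) is available to a later step on another surface, and a rerun with the same state skips work that already finished.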

Architectural Synergies: Anthropic Reasoning + AskUI Execution Layer

| Dimension | Raw Anthropic Computer Use API | AskUI AgentOS (with Anthropic) |
|---|---|---|
| Execution speed | Latency-bound by model inference for each step | Faster execution through hybrid execution and cached trajectories |
| Token cost | Often higher when every step requires a screen-to-model loop | Can be reduced when structured signals or cached paths can execute without a model call |
| Precision | Best-effort coordinate outputs | Execution logic that reduces retry loops and interaction errors |
| Workflow scope | Per-step interaction loop | Stateful orchestration across OS surfaces and devices |
| Best fit | Exploration and experimental automation | Production-grade automation under enterprise constraints |

Conclusion

Anthropic’s Computer Use is a significant step forward for software-interacting AI systems. It enables models like Claude to interpret interfaces and perform actions in environments where traditional automation tools struggle.

However, production environments require more than reasoning alone. Automation must be reliable, observable, cost-efficient, and capable of operating across complex infrastructure.

AskUI provides the execution layer that makes this possible.

By combining structured signals where they are stable with runtime UI control where they are not, AskUI enables computer-use agents to operate reliably across real enterprise systems.

Anthropic provides the intelligence. AskUI provides the infrastructure that allows that intelligence to run in production.

FAQ

Q1: Does AskUI replace Anthropic’s Computer Use? A: No. Anthropic’s Computer Use provides the reasoning capability that allows Claude to interpret screens and propose actions. AskUI provides the execution layer that makes those actions reliable and repeatable in production environments.

Q2: If Claude can already output coordinates, why is an execution layer needed? A: Coordinate predictions alone are not always sufficient for production workflows. Small inaccuracies can lead to retries, and repeated steps can introduce unnecessary latency and token cost. AskUI adds execution-side interaction logic and trajectory caching to improve precision and reduce redundant model calls.

Q3: What is trajectory caching in AskUI? A: Trajectory caching lets AskUI record and replay a known UI flow for repeat tasks, reducing repeated model calls on familiar steps. If the UI changes and the cached trajectory no longer applies, AskUI falls back to model reasoning to re-plan the flow and update execution.

Q4: Can AskUI run in enterprise environments such as VDI or Citrix? A: Yes. AskUI is designed to operate across environments where screen access and input control are available, including virtualized environments such as VDI or Citrix sessions.

Q5: When should teams use the raw Anthropic Computer Use API versus AskUI? A: The raw API is useful for experimentation and prototypes. AskUI becomes valuable when teams need an execution layer to run agents reliably across real environments.

Disclaimer: Anthropic and Claude are trademarks of Anthropic PBC. AskUI is an independent entity and is not affiliated with, sponsored by, or endorsed by Anthropic PBC.

YouYoung Seo
