Introduction: The "Demo Trap" of Computer Use Agents

The era of Computer Use Agents (CUA) has officially arrived. We’ve all seen the viral demos: AI agents browsing the web or booking flights in real time. However, enterprise leaders are discovering a painful reality. Agents that shine in a controlled demo often break under production constraints.

The failure isn’t in the AI’s intelligence. It’s in the lack of a robust architectural bridge between reasoning and physical infrastructure. To build a production-ready agent, you must evaluate it across three architectural layers: the Thinking Layer, the Hybrid Agentic Engine, and the Execution Environment.

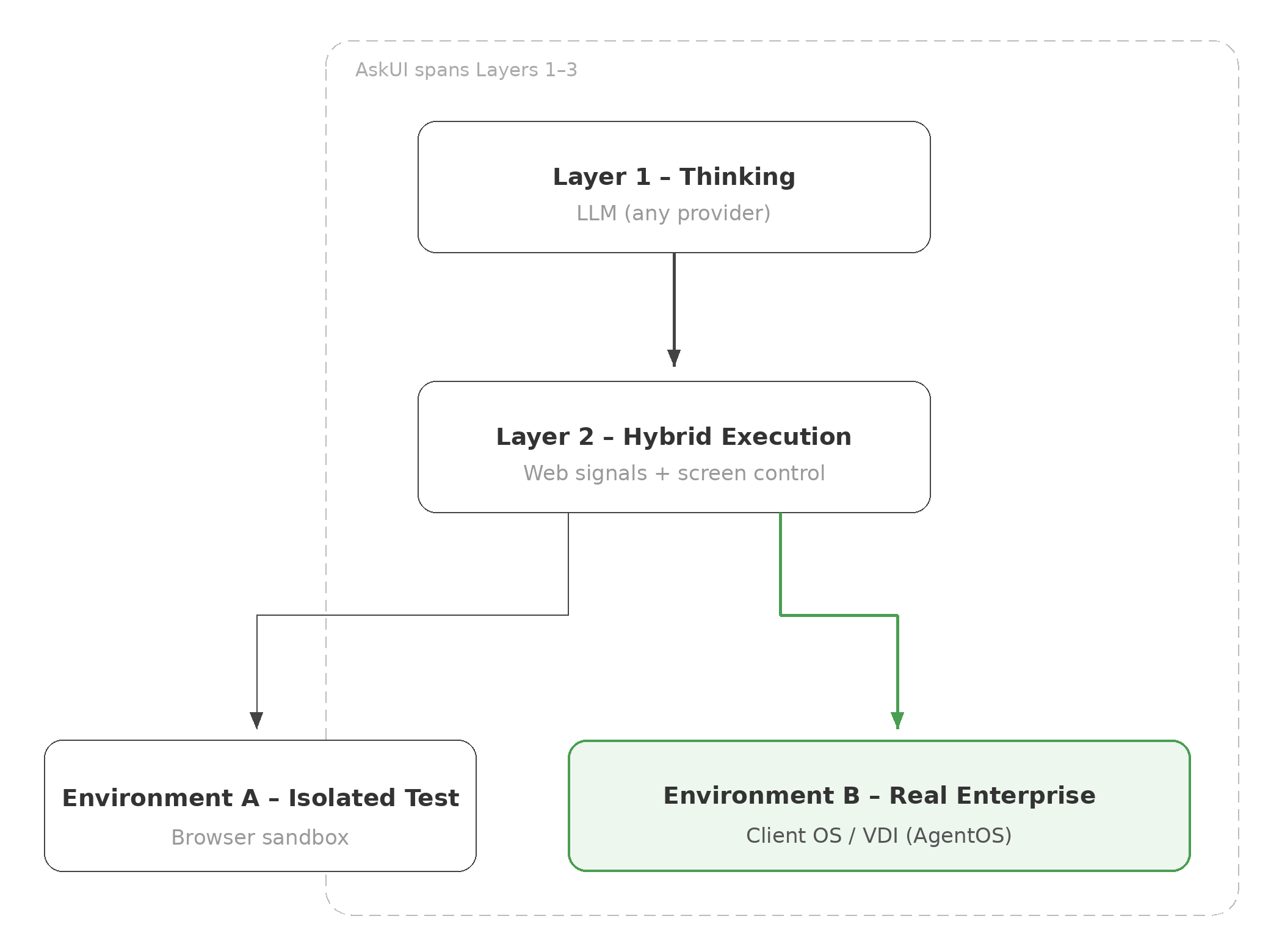

1. Layer 1: The Thinking Layer (The Brain)

The Thinking Layer is the reasoning engine responsible for high-level planning.

- Model Agnosticism: AskUI is built to be model-agnostic. While we host and support frontier models like Claude and GPT, our architecture allows you to plug in any provider or custom model that fits your security needs.

- The Reality: The "brain" is becoming a commodity. Whether you use OpenAI or Anthropic, the intelligence remains trapped unless it has a functional body to interact with the real world.
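To make this decoupling concrete, here is a minimal Python sketch of a provider-agnostic planning interface. `Step`, `ThinkingLayer`, `StubPlanner`, and `run` are hypothetical names chosen for illustration, not AskUI’s API. Where a real adapter would call a model provider (OpenAI, Anthropic, or a self-hosted model), the stub simply returns fixed steps.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Step:
    action: str  # e.g. "click" or "type"
    target: str  # human-readable description of the UI element or text


class ThinkingLayer(Protocol):
    """Hypothetical interface: anything that turns a goal into a list of steps."""

    def plan(self, goal: str) -> list[Step]: ...


class StubPlanner:
    """Hypothetical stand-in for a model adapter; a real one would call a provider API."""

    def __init__(self, name: str) -> None:
        self.name = name

    def plan(self, goal: str) -> list[Step]:
        # Fixed plan for illustration only; no model is called here.
        return [Step("click", "search box"), Step("type", goal)]


def run(planner: ThinkingLayer, goal: str) -> list[str]:
    # The execution side depends only on the interface, never on a specific provider.
    return [f"{step.action}:{step.target}" for step in planner.plan(goal)]


print(run(StubPlanner("self-hosted"), "book a flight"))
```

Swapping providers then means swapping the object passed to `run`; nothing on the execution side changes.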

2. Layer 2: Hybrid Agentic Engine (The Hands)

The Hybrid Agentic Engine translates abstract plans into physical actions. Many agents struggle here because they rely on a single execution mode: either browser-only automation, which can break once workflows move into desktop, VDI, or legacy systems, or purely screenshot-based execution, which at scale can add latency and limit practical end-to-end execution.

- Embracing Web Frameworks: Modern web automation frameworks provide speed and precision when structured signals such as the DOM or selectors are available. AskUI leverages these signals where applicable to maximize efficiency in browser-based environments.

- The AskUI Hybrid Approach: Rather than relying on a single execution mode, AskUI adapts to the environment. When structured web signals are available, we use frameworks such as Playwright for deterministic interaction. When those signals are unavailable, such as in legacy apps or VDI sessions, AskUI transitions to screen-based execution with native input control via AgentOS.

- Proven Performance: In the OSWorld Benchmark (Screenshot category), the AskUI Agentic Engine scored 66.2, outperforming OpenAI (42.9) and Anthropic (28). This result supports the case that hybrid execution is well suited to complex enterprise workflows.
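The per-action decision behind a hybrid approach can be sketched in a few lines of Python. This is an illustration under stated assumptions, not AskUI’s implementation: `Target`, `execute`, and the `dom_click`/`screen_click` callables are hypothetical stand-ins for a selector-based backend (the role a framework such as Playwright plays) and a screen-based backend with native input control. `LookupError` is an assumed placeholder for whatever error a real backend raises when a selector no longer resolves.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Target:
    description: str                # what the planner asked for, e.g. "Submit button"
    selector: Optional[str] = None  # DOM/CSS selector, if the environment exposes one


def execute(
    target: Target,
    dom_click: Optional[Callable[[str], str]],
    screen_click: Callable[[str], str],
) -> str:
    """Hypothetical dispatcher: prefer structured signals, fall back to the screen."""
    if dom_click is not None and target.selector:
        try:
            # Structured signals present: deterministic, selector-based interaction.
            return dom_click(target.selector)
        except LookupError:
            pass  # selector did not resolve; fall through to screen-based mode
    # No DOM (legacy desktop app, VDI stream) or the DOM path failed:
    # locate the element visually and drive native mouse/keyboard input.
    return screen_click(target.description)


def dom_ok(selector: str) -> str:
    return f"dom:{selector}"


def screen(description: str) -> str:
    return f"screen:{description}"


print(execute(Target("Submit", "#submit"), dom_ok, screen))  # browser with DOM
print(execute(Target("Submit", "#submit"), None, screen))    # VDI session, no DOM
```

Deciding per action rather than per workflow lets a single task start in a browser and continue in a desktop app or VDI session without switching tools.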

3. Layer 3: The Execution Environment (The Workspace)

This is the "ground" the agent stands on, and it is one of the biggest hurdles for enterprise security and integration.

- The Value of Sandboxes: Many agents run inside isolated cloud containers or browser sandboxes. This can be a safe way to test autonomy in a “driving simulator,” but such sandboxes may be disconnected from internal systems and real enterprise workspaces. In some scenarios, sandboxing isn’t feasible at all. For example, in software-in-the-loop (SIL) and hardware-in-the-loop (HIL) setups, the test bench is physical and must be operated in the real environment.

- The AskUI AgentOS: AskUI provides AgentOS, a native runtime designed to execute inside customer-managed environments (end-user devices and VDI sessions), not just isolated test setups.

- Enterprise Security: With on-prem options and execution on managed infrastructure, teams can keep sensitive workflows within their security perimeter and support governance and data residency requirements.

Conclusion: From a "Genius in a Box" to a "Production Employee"

The AI industry is full of “Geniuses in a Box”: exceptionally intelligent LLMs that are often confined to browser tabs and virtual sandboxes. These agents can be impressive for research and isolated testing, but they frequently struggle to deliver end-to-end business value once they must operate across the complex, fragmented infrastructure of a real enterprise.

For a CTO, the goal isn’t just to have a smart AI. It’s to have a productive digital employee. AskUI AgentOS bridges this gap. By combining a model-agnostic Thinking Layer, a Hybrid Agentic Engine, and a native Execution Environment, we provide a production-ready solution that moves beyond the "driving simulator" and onto the "real highway" of your business. We don’t just show you what AI can do in a demo; we enable it to work where your enterprise actually runs.

FAQ

Q1. Why do most AI agents fail when interacting with legacy Windows apps or VDIs? A: Many agents rely on browser-native or code-based signals (HTML/DOM). In legacy Windows apps or VDIs where those signals aren’t available, reliability can degrade and actions may fail. AskUI addresses this with a hybrid execution approach. We leverage code-based signals when available for speed and precision, and fall back to screen-based understanding and native input control when they are not.

Q2. How does AskUI handle security and data privacy in an enterprise environment? A: AgentOS can run inside customer-managed environments, so execution and data handling can stay within your security perimeter and align with governance and data residency requirements.

Q3. Can I integrate my own choice of LLM with AskUI? A: Absolutely. AskUI is model-agnostic. We provide the Execution Layer (the “body”) so you can connect the Thinking Layer (the “brain”) you prefer: Anthropic, OpenAI, or another provider. For stricter privacy and control, teams can also use self-hosted or custom models where supported.

Q4. What is the significance of AskUI's OSWorld Benchmark score? A: OSWorld is a benchmark that evaluates multimodal agents on real operating systems (Windows/macOS/Linux) using execution-based tasks across arbitrary apps. AskUI’s performance matters because it aligns directly with our focus on reliable OS-level execution across real enterprise workflows, not just browser-only demos. A strong OSWorld score is a practical signal that our hybrid engine with AgentOS can ground actions on the screen and carry tasks across apps and environments, where enterprise work actually happens.